Researchers at Los Alamos National Laboratory have developed a new method to protect neural networks against adversarial attacks, which manipulate input data to push a model toward incorrect predictions.

Adversarial attacks are among the most serious threats to neural networks: subtle perturbations to input data can drastically change a model's output. Such manipulations let malicious actors pass off false information as credible.
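To make "subtle perturbations" concrete, here is a minimal Python sketch of a fast-gradient-sign-style attack against a hypothetical linear classifier. The toy model, its weights, and the helper names (`score`, `fgsm_linear`) are illustrative assumptions and are not part of the Los Alamos work. The gradient of a linear score with respect to the input is simply the weight vector, so nudging every feature by at most `eps` against that gradient adds up across many features and can flip the prediction.

```python
def sign(v: float) -> int:
    """Return 1, -1, or 0 according to the sign of v."""
    return (v > 0) - (v < 0)


def score(w: list[float], b: float, x: list[float]) -> float:
    """Linear decision score of a hypothetical toy classifier: >0 is class A, <0 is class B."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def fgsm_linear(w: list[float], b: float, x: list[float], eps: float) -> list[float]:
    """Fast-gradient-sign step for a linear model (illustrative toy attack).

    The gradient of score() with respect to x is w, so moving each feature
    by eps in the direction -sign(current score) * sign(w) pushes the score
    toward the opposite class as far as a per-feature budget of eps allows.
    """
    s = sign(score(w, b, x))
    return [xi - eps * s * sign(wi) for wi, xi in zip(w, x)]


# 100 features with alternating +1/-1 weights; the clean input scores +2.5.
w = [1.0 if i % 2 == 0 else -1.0 for i in range(100)]
b = 0.0
x = [0.55 if i % 2 == 0 else 0.5 for i in range(100)]

# Each feature moves by only 0.05 (about 10% of its value), yet the class flips.
x_adv = fgsm_linear(w, b, x, eps=0.05)
print(round(score(w, b, x), 6), round(score(w, b, x_adv), 6))  # prints: 2.5 -2.5
```

The per-feature change is small, but it is applied coherently to all 100 inputs, which is why high-dimensional models such as image classifiers are especially exposed.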

The technique, called Low-Rank Iterative Diffusion (LoRID), combines generative diffusion processes with tensor decomposition to remove these perturbations. In tests on widely used benchmark datasets, including CIFAR-10, CIFAR-100, CelebA-HQ, and ImageNet, it effectively neutralized adversarial perturbations.

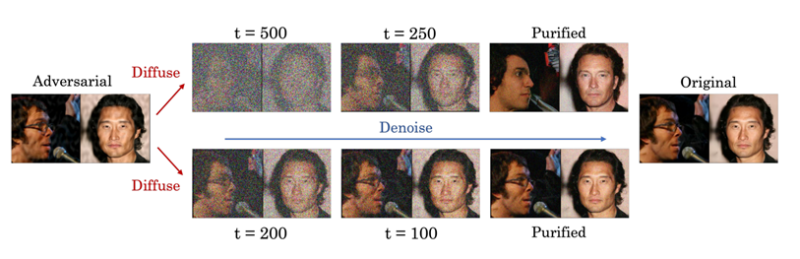

Diffusion models learn the underlying structure of data by gradually adding noise to it and then learning to reverse that process, restoring the data to its original state. Used as a defense, a diffusion model first adds noise to an input and then removes it. This creates a trade-off: adding too much noise erases critical details, while adding too little fails to wash out the adversarial manipulation. LoRID addresses this by running multiple noising-and-denoising iterations at the early stages of the diffusion process, preserving essential information while still removing the perturbation.
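The general idea of diffusion-based purification with several light passes can be sketched in plain Python. This is a hedged illustration, not LoRID's implementation: the forward step follows the standard DDPM-style noising formula, but the denoiser is a hypothetical moving-average filter standing in for a trained reverse diffusion model, and the parameter values (`alpha_bar=0.98`, `loops=3`) and the sine-wave "clean" signal are assumptions chosen for the demo.

```python
import math
import random


def forward_noise(x, alpha_bar, rng):
    """DDPM-style forward step: sqrt(alpha_bar) * x + sqrt(1 - alpha_bar) * Gaussian noise."""
    a, s = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * xi + s * rng.gauss(0.0, 1.0) for xi in x]


def toy_denoise(x_t, alpha_bar, window=2):
    """Hypothetical stand-in for a trained reverse diffusion model.

    Undoes the sqrt(alpha_bar) scaling, then applies a moving average,
    which assumes clean signals are smooth. A real defense would use a
    learned denoiser here.
    """
    a = math.sqrt(alpha_bar)
    x = [v / a for v in x_t]
    n = len(x)
    out = []
    for i in range(n):
        lo, hi = max(0, i - window), min(n, i + window + 1)
        out.append(sum(x[lo:hi]) / (hi - lo))
    return out


def purify(x, alpha_bar=0.98, loops=3, seed=0):
    """Repeat several light noise-then-denoise passes (alpha_bar near 1 means little noise)."""
    rng = random.Random(seed)
    for _ in range(loops):
        x = toy_denoise(forward_noise(x, alpha_bar, rng), alpha_bar)
    return x


def rms(u, v):
    """Root-mean-square difference between two equal-length signals."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)) / len(u))


clean = [math.sin(2 * math.pi * i / 64) for i in range(128)]
# High-frequency +/-0.3 pattern standing in for an adversarial perturbation.
adversarial = [c + (0.3 if i % 2 == 0 else -0.3) for i, c in enumerate(clean)]
purified = purify(adversarial)
print(f"before: {rms(adversarial, clean):.3f}  after: {rms(purified, clean):.3f}")
```

Each pass injects only a small amount of noise, so no single step destroys the signal's shape; repeating the pass is what gradually strips away the perturbation.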

A defining strength of LoRID is its ability to identify attack-specific patterns that often evade conventional defenses. The method removes these adversarial signatures using tensor decomposition.
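As its name suggests, LoRID relies on low-rank structure. As a hedged illustration of that underlying idea, the sketch below uses the simplest two-dimensional case of a low-rank decomposition, a rank-1 truncated SVD computed by power iteration, as a stand-in for whatever tensor factorization LoRID actually uses (the article does not say which). The assumption is that clean data concentrate in a few dominant components while an adversarial perturbation spreads its energy across many, so keeping only the leading component discards most of the perturbation. The matrix size, values, and function names are hypothetical.

```python
import math
import random

Matrix = list[list[float]]


def rank1_approx(m: Matrix, iters: int = 50) -> Matrix:
    """Best rank-1 approximation sigma * u v^T via power iteration.

    Alternately multiplying by M and M^T converges to the leading singular
    pair (assuming a gap between the top two singular values), so this is
    the rank-1 truncated SVD.
    """
    rows, cols = len(m), len(m[0])
    v = [1.0] * cols
    u, sigma = [0.0] * rows, 0.0
    for _ in range(iters):
        u = [sum(m[i][j] * v[j] for j in range(cols)) for i in range(rows)]
        nu = math.sqrt(sum(x * x for x in u))
        u = [x / nu for x in u]
        v = [sum(m[i][j] * u[i] for i in range(rows)) for j in range(cols)]
        sigma = math.sqrt(sum(x * x for x in v))
        v = [x / sigma for x in v]
    return [[sigma * u[i] * v[j] for j in range(cols)] for i in range(rows)]


def frobenius_dist(a: Matrix, b: Matrix) -> float:
    """Frobenius norm of a - b."""
    return math.sqrt(sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)))


n = 16
rng = random.Random(0)
# Hypothetical "clean image": a smooth rank-1 pattern.
clean = [[(i + 1) * (j + 1) / n for j in range(n)] for i in range(n)]
# Hypothetical perturbation: random +/-0.5 entries, which is generically full rank.
noisy = [[c + rng.choice((-0.5, 0.5)) for c in row] for row in clean]
restored = rank1_approx(noisy)
print(f"before: {frobenius_dist(noisy, clean):.2f}  after: {frobenius_dist(restored, clean):.2f}")
```

Real images are not exactly rank-1, so a practical defense would keep more components (or use a genuine tensor decomposition over color channels and spatial dimensions); the rank is the knob that trades detail preservation against perturbation removal.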

To evaluate the method, the researchers used Venado, an AI-optimized supercomputer, which greatly reduced computation time: tasks that would traditionally have taken months of processing were completed in hours. The speedup accelerated development and lowered computational costs while the team validated the technique's robustness in real-world scenarios.

The findings pave the way for deploying LoRID across a wide range of applications, including protecting national infrastructure. Because the method preprocesses input data before they reach a machine learning model, it helps safeguard data integrity and security and improves the reliability of AI-driven systems.