Researchers at Carnegie Mellon University have developed an artificial intelligence algorithm capable of solving complex logical problems almost entirely from scratch—without prior training on vast datasets. This groundbreaking system, named CompressARC, introduces a fundamentally novel approach to information processing.

Unlike conventional neural networks that rely on extensive datasets for training, CompressARC tackles each problem independently, striving to identify its most concise mathematical representation, from which the full solution can then be reconstructed.

At the core of CompressARC lies a specialized decoder neural network. Unlike the transformer-based architectures behind modern language-model products such as ChatGPT, this network is not used to encode inputs: its sole function is to reconstruct solutions from a compact representation. To do this, it uses a “residual stream,” a running representation that each processing stage reads from and refines, so that intermediate results are preserved and progressively improved into the final answer.
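
The residual-stream idea can be illustrated with a minimal sketch. This is not CompressARC's actual architecture: the function `run_residual_stream` and the toy layer `halve_gap_to_one` are hypothetical names chosen for illustration, and the only point is that each stage adds a correction to a shared running state instead of overwriting it.

```python
from typing import Callable, List

Vector = List[float]
Layer = Callable[[Vector], Vector]


def run_residual_stream(stream: Vector, layers: List[Layer]) -> List[Vector]:
    """Apply each layer's update additively and return every intermediate state."""
    history = [list(stream)]
    for layer in layers:
        update = layer(stream)                             # the layer reads the stream
        stream = [s + u for s, u in zip(stream, update)]   # and adds its refinement
        history.append(list(stream))
    return history


def halve_gap_to_one(x: Vector) -> Vector:
    """Toy layer (an assumption): proposes a correction that moves each value halfway toward 1.0."""
    return [0.5 * (1.0 - v) for v in x]


states = run_residual_stream([0.0, 2.0], [halve_gap_to_one, halve_gap_to_one])
for step, state in enumerate(states):
    print(step, state)
```

Because updates are added and the earlier states are kept in `history`, every intermediate result remains available, which is the property the paragraph above describes.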

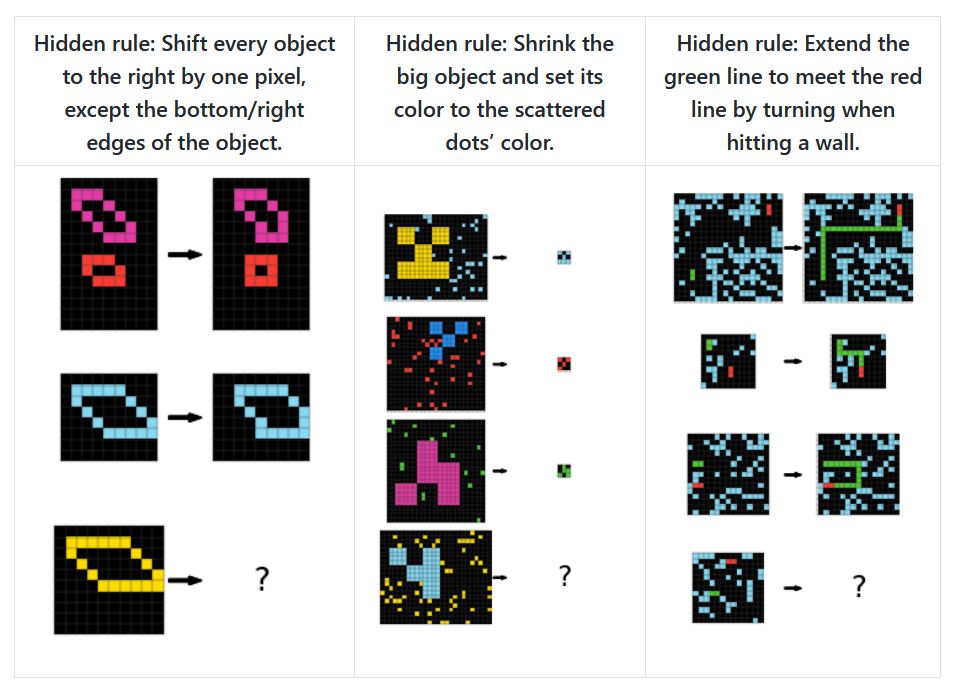

To assess the efficacy of this novel approach, doctoral student Isaac Liao and Professor Albert Gu selected one of the most formidable AI benchmarks: the ARC-AGI dataset, created in 2019 by machine learning expert François Chollet. The dataset consists of visual puzzles in which a solver must infer a pattern from a few examples and apply it to a new case.

A typical puzzle presents a grid divided by light-blue lines, with a specific rule governing how cells should be colored. The corners must be black, the central cell purple, and the remaining cells colored based on their relative position to the center: red for the upper section, blue for the lower, green for the right, and yellow for the left. While seemingly simple, such puzzles demand sophisticated AI capabilities, including spatial reasoning, pattern recognition, and rule generalization to unseen scenarios.
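
Written out as code, the rule for this example puzzle is short. The sketch below assumes the simplest case, a 3×3 arrangement of cells with rows numbered top to bottom and columns left to right; the function names are illustrative, and the difficulty for an AI lies in discovering such a rule from a few examples, not in executing it.

```python
from typing import List


def color_cell(row: int, col: int) -> str:
    """Return the color of one cell in an assumed 3x3 layout (row 0 is the top, col 0 the left)."""
    if (row, col) == (1, 1):
        return "purple"          # central cell
    if row != 1 and col != 1:
        return "black"           # the four corners
    if row == 0:
        return "red"             # above the center
    if row == 2:
        return "blue"            # below the center
    if col == 2:
        return "green"           # right of the center
    return "yellow"              # left of the center


def solve_grid() -> List[List[str]]:
    """Color every cell of the 3x3 layout according to the rule."""
    return [[color_cell(r, c) for c in range(3)] for r in range(3)]


for row in solve_grid():
    print(row)
```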

CompressARC relies on gradient descent, an optimization method that improves a solution iteratively through small adjustments. The system “feels its way” through the solution space: it tweaks parameters, measures the effect, and moves in whichever direction improves the result. What sets CompressARC apart from other systems is that it does not rely on precomputed answer choices. Instead, it searches for the most compressed representation of each puzzle, a short description that captures the puzzle’s rule and from which the answer can be reconstructed.
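
As a minimal illustration of gradient descent in general (not of CompressARC's actual objective, which the article does not spell out), the sketch below minimizes the toy function f(x) = (x − 3)². The function name, learning rate, and step count are assumptions chosen for the example.

```python
from typing import Callable


def gradient_descent(grad: Callable[[float], float], x0: float,
                     learning_rate: float = 0.1, steps: int = 100) -> float:
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        x -= learning_rate * grad(x)   # small adjustment in the downhill direction
    return x


# Toy objective f(x) = (x - 3)^2 has gradient 2 * (x - 3) and its minimum at x = 3.
x_min = gradient_descent(lambda x: 2.0 * (x - 3.0), x0=0.0)
print(x_min)
```

Each step shrinks the distance to the minimum by a constant factor here; real objectives are far less well behaved, which is why step size and iteration count matter in practice.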

The results of CompressARC’s evaluation are striking: it correctly solves 34.75% of the training set puzzles and 20% of the evaluation set puzzles. These figures fall well short of OpenAI’s o3 model, which achieves 75.7% under compute limits and 87.5% in a high-compute configuration. CompressARC’s advantage is cost: it needs no pretraining and spends roughly 20 minutes per puzzle on a consumer-grade RTX 4070 GPU, whereas o3 demands extensive server resources.

When we compress data, we inherently seek patterns and structure, much as the human brain extracts meaning from the world. This principle is formalized in two foundational concepts: Kolmogorov complexity (the length of the shortest program that produces a given output) and Solomonoff induction (a theoretical framework for optimal prediction that weights candidate explanations of the observed data by their simplicity). An algorithm that compresses information efficiently must capture its structure and uncover hidden patterns, capabilities often regarded as hallmarks of intelligence.
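
Kolmogorov complexity itself is uncomputable, but the size of a compressed file gives a rough upper bound on it. The sketch below uses Python's standard `zlib` module as a stand-in compressor (an assumption for illustration, unrelated to CompressARC's method) to show that data with an obvious pattern compresses far better than pseudo-random data of the same length.

```python
import random
import zlib


def compressed_size(data: bytes) -> int:
    """Size in bytes after zlib compression at the highest level; a crude proxy for complexity."""
    return len(zlib.compress(data, 9))


patterned = b"ab" * 500                                   # 1000 bytes with an obvious rule
rng = random.Random(0)                                    # fixed seed for reproducibility
noise = bytes(rng.getrandbits(8) for _ in range(1000))    # 1000 pseudo-random bytes

print("patterned:", compressed_size(patterned), "bytes")
print("noise:    ", compressed_size(noise), "bytes")
```

The patterned input shrinks to a small fraction of its size because a short rule ("repeat `ab` 500 times") describes it, while the noise has no structure for the compressor to exploit.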

Research in this area has already produced surprising results. In September 2023, a team at DeepMind reported that their Chinchilla 70B language model outperformed specialized compression algorithms: it compressed image patches to 43.4% of their original size (compared with 58.5% for PNG) and audio samples to 16.4% (compared with 30.3% for FLAC).

Of course, CompressARC has its limitations. It handles color assignment, gap-filling, and pixel adjacency well, but it struggles with counting, long-range patterns, rotations, and reflections.

Skeptics argue that CompressARC’s success may stem from exploiting mathematical properties inherent to ARC puzzles, such as their rigid geometric structure and constrained transformation sets. If so, this approach might be less effective on more complex or less-structured datasets.

Nevertheless, this result could mark a pivotal moment in AI research. Instead of merely increasing computational power and data volume, researchers are turning their attention to how machines process and organize information. Such a shift would not only conserve resources but could also bring us closer to understanding cognition itself.